Recent industry research from RSM reports that 92 percent of middle-market executives face serious challenges with AI strategy implementation, and 62 percent find deploying generative AI harder than expected. For GCC and Saudi boards, this gap between ambition and readiness can very quickly turn an AI strategy implementation budget into sunk cost before a single model ships.

Effective AI strategy implementation in a Saudi or GCC enterprise starts long before a proof of concept or model build. The real pre-work is a set of board-level decisions about business outcomes, data, integration, governance, ownership, and the mix of embedded, configured, and custom AI.

This article is the pre-work. Six decisions, in the order they have to be made, before any vendor demo, any proof of concept, any line of training data.

Key Takeaways

Before we go deeper, here are the core ideas this article keeps coming back to.

- The six most commonly skipped pre-work decisions in AI Strategy Implementation center on business goals, not models or tools, and they quietly determine whether pilots ever reach scale.

- Business-first sequencing is the main driver of AI return on investment, while technology-first sequencing almost always locks in rework and vendor regret.

- Governance, data foundations, and integration readiness must be defined and funded before deployment, or operating risk and clean-up costs rise sharply.

- Enterprises aligned with Vision 2030 face greater exposure because timelines push leaders to sign AI contracts before their organizations are structurally ready.

Why Most AI Strategy Implementation Plans Fail Before Launch

The IBM Global AI Adoption Index has tracked the same gap for three years running: enterprise interest in AI keeps climbing, but only a minority of enterprises feel they have the data, talent, and governance in place to use it. McKinsey's 2025 State of AI survey shows that organizations capturing measurable EBIT impact from AI share three habits, and none of them are about the model.

The pattern is consistent. The teams that get returns invest 18 months in the foundation. The teams that lose money invest 18 months in the proof of concept and then spend the next 18 months on the foundation anyway, after a board has lost confidence.

Three failure modes show up first:

- Goal drift. "We want to do something with AI" is not a goal. It is a budget waiting for a vendor.

- Foundation skipping. The data is treated as a project task, not a precondition.

- Governance debt. Approval, monitoring, and risk ownership get postponed until something breaks.

If you fix those three, you are already ahead of most of the market.

Decision 1: Set the Goal First — What AI ROI for Enterprises Actually Looks Like

Before any architecture conversation, name the financial outcome. Not in language. In a number.

Enterprise AI ROI lives in three categories, and one project should belong to one of them — not all three at once.

- Cost reduction. A measurable line item that shrinks. Reduced cost-to-serve, lower cycle time on a finance close, and fewer FTE hours on document review.

- Revenue acceleration. A measurable line item that grows. Higher conversion on quoted deals, faster sales cycle, larger average contract value.

- Risk avoidance. A measurable exposure that drops. Reduced fraud loss, faster anomaly detection, and fewer compliance incidents.

The test for a real AI goal: it should fit on one line, with a starting figure and a target figure, and a finance person should be willing to sign off on the measurement method before the model is built.

If your AI use case cannot answer "from what to what, by when, and who measures it," you do not have a use case yet. You have a wish.
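The one-line test can be written down as a small record that refuses to pass until every part is answered. The sketch below is only an illustration: the `UseCaseGoal` class, its field names, and the example figures are assumptions made here, not a format the article prescribes.

```python
from dataclasses import dataclass
from datetime import date

# The three ROI categories named in this section.
CATEGORIES = ("cost_reduction", "revenue_acceleration", "risk_avoidance")


@dataclass(frozen=True)
class UseCaseGoal:
    """One-line financial goal for an AI use case (hypothetical structure)."""
    category: str      # exactly one of CATEGORIES
    metric: str        # the line item being moved
    baseline: float    # starting figure ("from what")
    target: float      # target figure ("to what")
    deadline: date     # "by when"
    measured_by: str   # named finance owner of the measurement method

    def missing_parts(self):
        """List the parts of the goal that are still unanswered."""
        gaps = []
        if self.category not in CATEGORIES:
            gaps.append("category")
        if not self.metric.strip():
            gaps.append("metric")
        if self.baseline == self.target:
            gaps.append("target differs from baseline")
        if not self.measured_by.strip():
            gaps.append("measured_by")
        return gaps

    def one_line(self):
        """Render the goal as the single line a finance lead would sign."""
        return (
            f"{self.metric}: from {self.baseline:g} to {self.target:g} "
            f"by {self.deadline.isoformat()}, measured by {self.measured_by}"
        )


# Illustrative figures only.
goal = UseCaseGoal(
    category="cost_reduction",
    metric="finance close cycle time (days)",
    baseline=12,
    target=7,
    deadline=date(2026, 12, 31),
    measured_by="Head of FP&A",
)
wish = UseCaseGoal("something with AI", "", 0, 0, date(2026, 12, 31), "")
```

Here `goal.missing_parts()` comes back empty, while `wish.missing_parts()` lists every gap. That is the difference between a use case and a wish, expressed as a check.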

Decision 2: Audit the Data — The Foundation Beneath Any Artificial Intelligence Roadmap

Gartner has reported for years that the majority of organizations lack data management practices that are AI-ready. That picture does not change because most enterprises buy the AI tool before fixing the input.

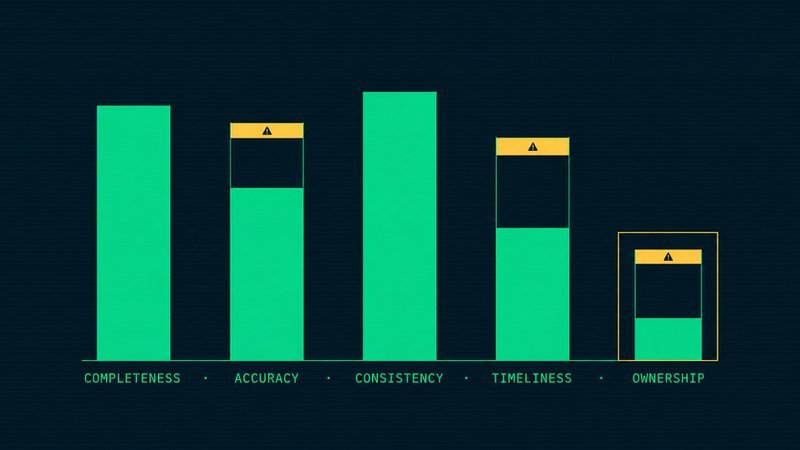

Run the audit before any vendor conversation. Five questions, in order:

- Completeness. What percentage of records in the source system have all the fields the model will rely on?

- Accuracy. When was the last time someone sampled this data against ground truth, and what was the error rate?

- Consistency. Are the same entities — customer, product, vendor — represented the same way in every system that touches them?

- Timeliness. When the model needs an answer, is the underlying data fresh enough for the answer to still be true?

- Ownership. Who is the named individual accountable when the data is wrong? Not a department. A person.

The fifth question is the one that fails most enterprises. If you cannot answer it, you do not have a data foundation. You have a queue of disputes waiting to surface during the pilot.
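Three of the five questions (completeness, timeliness, ownership) can be checked mechanically against an export of the source system. The following is a minimal sketch under stated assumptions: records arrive as plain dictionaries, the field names are invented for illustration, and the 30-day freshness window is a placeholder. Accuracy and consistency are left out because they need a ground-truth sample and a cross-system comparison.

```python
from datetime import datetime, timedelta


def audit_source(records, required_fields, updated_field, max_age, now, owner):
    """Summarise completeness, timeliness, and ownership for one source system."""
    total = len(records)

    def is_complete(record):
        # A record is complete when every required field is present and non-empty.
        return all(record.get(field) not in (None, "") for field in required_fields)

    def is_fresh(record):
        # A record is fresh when it was updated within max_age of `now`.
        updated = record.get(updated_field)
        return updated is not None and now - updated <= max_age

    def pct(count):
        return round(100 * count / total, 1) if total else 0.0

    return {
        "completeness_pct": pct(sum(is_complete(r) for r in records)),
        "timeliness_pct": pct(sum(is_fresh(r) for r in records)),
        # Ownership means a named person, so an empty string fails.
        "has_named_owner": bool(owner and owner.strip()),
    }


# Illustrative records only; field names and values are made up.
now = datetime(2026, 1, 31)
customers = [
    {"id": 1, "name": "A", "segment": "retail", "updated_at": datetime(2026, 1, 30)},
    {"id": 2, "name": "B", "segment": "", "updated_at": datetime(2026, 1, 29)},
    {"id": 3, "name": "C", "segment": "sme", "updated_at": datetime(2025, 6, 1)},
    {"id": 4, "name": "D", "segment": "sme", "updated_at": None},
]
report = audit_source(
    customers, ["id", "name", "segment"], "updated_at",
    max_age=timedelta(days=30), now=now, owner="",
)
```

For this sample, `report` shows 75.0 percent completeness, 50.0 percent timeliness, and no named owner. On the article's reading, the missing owner is the finding that matters most.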

Decision 3: The Cultural Shift — The Quiet Half of AI Business Integration

AI changes who decides. That is the part most strategy decks skip.

When a model takes over a step a human used to perform — credit scoring, document classification, lead routing — the locus of judgment moves. Someone who used to make the call now reviews the model's call. The job changes. The accountability changes. The training changes.

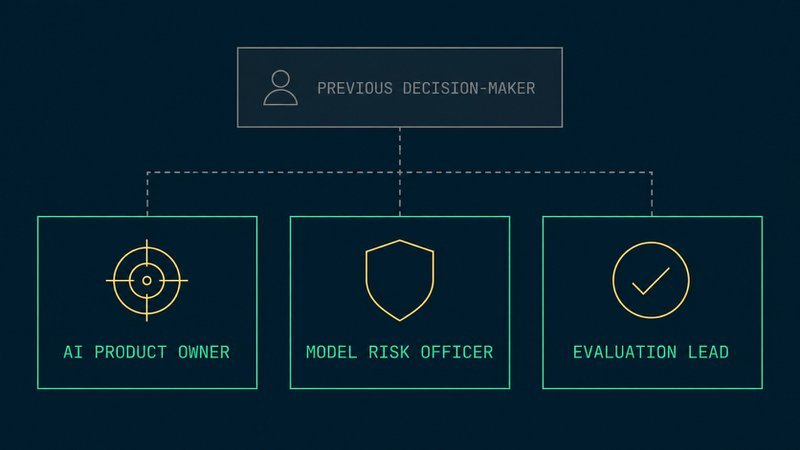

Three roles most GCC enterprises do not have yet, and need before deployment:

- AI product owner. Owns the use case end-to-end, sets the success metric, decides when the model is ready, and when it is rolled back.

- Model risk officer. Reviews model behavior across business units, owns the approval gate before any model goes live.

- Prompt and evaluation lead. Owns the test set, the evaluation framework, and the regression checks every time the model or its inputs change.

Cultural readiness is not training people to use a chatbot. It is naming the people who will say yes and no to AI in production.

Decision 4: Build vs Buy vs Partner — The Capability Decision

The most expensive decision in any AI strategy implementation is treating this question as a procurement exercise. It is a strategy exercise.

| Option | Use when | Risk |
|---|---|---|
| Build | The AI capability is core competitive IP; you cannot rent it without renting your moat. | Talent scarcity, 18–36 months to value. |
| Buy | The capability is a feature inside a workflow you already run: productivity, search, summarization. | Vendor lock-in, undifferentiated outcome. |
| Partner | The scarcity is in talent, not technology, and you need outcomes inside 90 days. | Knowledge transfer, IP boundaries. |

For most GCC enterprises in 2026, partner-first is the right answer for the first three use cases. Build can come later, after the organization has shipped something. Buy is fine for commodity capability. Partner is what closes the talent gap fastest, with the lowest risk of building a team you cannot retain.

The decision is not technology. It is honesty about which scarcity you are actually solving.

Decision 5: Sequence Your First Three Use Cases — A Scalable AI Framework

Pick the wrong first use case, and you spend a year defending the program. Pick the right one, and the second is already half-funded.

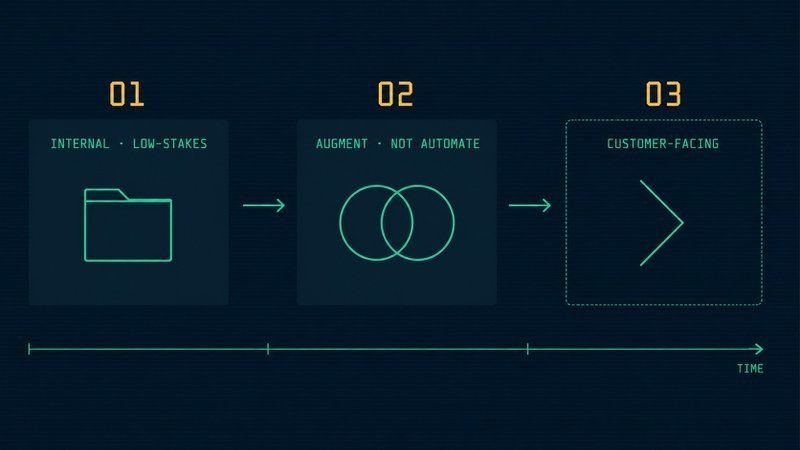

A sequence that works:

- Use case 1: Internal, low-stakes, high-tolerance. Knowledge retrieval inside a single department. Document processing on a known corpus. An internal search that does not face a customer. The goal is to ship something, learn the operational pattern, and build organizational confidence.

- Use case 2: Augment, not automate. A model that supports a decision that humans still make. Sales lead prioritization, supplier risk scoring, and contract review assistance. The model recommends. The person decides. Adoption depends on the person trusting the recommendation, which depends on the data foundation you built in Decision 2.

- Use case 3: Customer-facing, only after use cases one and two have shipped. Self-service support, personalized recommendations, intelligent routing. By this point, the organization has the governance, the evaluation framework, and the cultural muscle to ship safely.

Three use cases in this order build the operating system for everything after them. Three use cases in any other order build a portfolio of pilots.

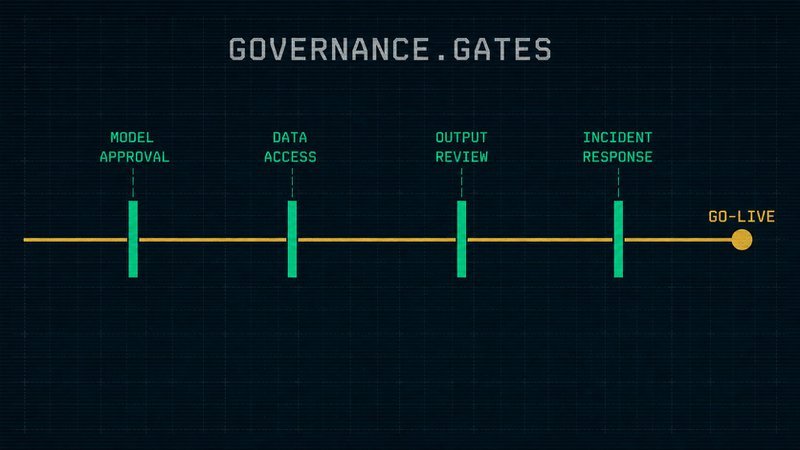

Decision 6: AI Governance — The Final Gate of AI Strategy Implementation

Governance written after a model is in production is not governance. It is forensics.

Four governance decisions belong in the strategy phase, not the launch phase:

- Model approval. Who signs off before any model serves a real user? Not "the team." A name and a role.

- Data access. Which datasets are the model allowed to see, and who is accountable for the answer when that scope changes?

- Output review. What is the sampling rate for human review of model outputs in the first 90 days? In the second 90 days?

- Incident response. When a model misbehaves, what is the kill switch, who flips it, and how is the rollback communicated to the business?

If those four questions cannot be answered in writing, the model is not ready. The strategy is not ready.
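The output review question becomes concrete once the sampling rate is written as a schedule. The sketch below is one possible shape, not a recommended policy: the 20 percent and 5 percent rates, the `REVIEW_SCHEDULE` name, and the hash-based sampler are all assumptions chosen for illustration. Hashing the output ID makes the decision repeatable, so an auditor can later confirm which outputs were due for review.

```python
import hashlib

# Assumed schedule: (first day, last day, share of outputs sent to human review).
# The rates are placeholders; the real values belong in the governance document.
REVIEW_SCHEDULE = [(0, 89, 0.20), (90, 179, 0.05)]


def review_rate(days_since_launch, schedule=REVIEW_SCHEDULE, default=0.0):
    """Return the sampling rate that applies on a given day after launch."""
    for first_day, last_day, rate in schedule:
        if first_day <= days_since_launch <= last_day:
            return rate
    return default


def needs_human_review(output_id, rate):
    """Deterministically select roughly `rate` of outputs for human review."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    digest = hashlib.sha256(output_id.encode("utf-8")).digest()
    # Map the ID to an integer in [0, 2**64) and compare against the threshold.
    bucket = int.from_bytes(digest[:8], "big")
    return bucket < int(rate * 2**64)


# Example: decide on day 10 whether a given model output goes to a reviewer.
flagged = needs_human_review("output-000123", review_rate(10))
```

The same output ID always yields the same decision at the same rate, which keeps the review sample defensible when someone asks why a given output was or was not checked.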

How Jood Alliance Accelerates AI Strategy Implementation in Saudi Enterprises

Jood Alliance was created in Saudi Arabia to close exactly these pre-work gaps for mid-size to large enterprises. The firm starts with Outcome-Driven Planning, a method that requires agreement on value, scope, stakeholders, and KPIs before any vendor or model is selected. That gives boards and CIOs a single, signed view of why AI Strategy Implementation exists at all and what success should look like in numbers.

From there, Jood Alliance develops a Target Architecture and Operating Model that spans cloud, ERP, data, AI, and industrial systems in one view. Partnerships with companies such as Mashura for ERP, ShebangLabs for cloud, Vimana for industrial IoT networks, and Wizr for Agentic AI sit inside that wider design instead of around it. This keeps multi-vendor complexity from quietly becoming the weak point of the entire program and helps internal teams see where their existing platforms already carry AI capability.

For Saudi enterprises working under Vision 2030 delivery dates, compressing time to first value without cutting corners on controls is the difference between an AI headline and an AI profit and loss statement that stands up in board and audit meetings.

Is Your Enterprise Actually Ready? The Five Readiness Dimensions Most Boards Overlook

Enterprise readiness for AI Strategy Implementation is not a feeling; it is a set of observable conditions across five dimensions. When boards test themselves against these areas, they quickly see whether AI investment belongs in this quarter or the next one. This matters even more in the GCC, where Vision 2030 programs link directly to national performance.

McKinsey reinforces this, finding that high-performing companies are about three times more likely to have an enterprise-wide AI strategy directly linked to their core corporate strategy. That gap between ambition and readiness is where most AI budgets quietly disappear.

In other words, organizational readiness multiplies the effect of technical investment — not the other way around. Here is a simple diagnostic view you can bring into a board or investment committee.

| Readiness Dimension | Core Question | Risk If Weak |
|---|---|---|
| Strategic Alignment | Does AI serve the enterprise North Star and sector strategy, or just a silo? | AI work delivers local wins while market share, margin, or service levels barely move. |
| Data Readiness | Is proprietary data clean, governed, and reachable for training and inference? | Models learn from noisy, biased, or incomplete data, which undermines trust in every output. |
| Integration Architecture | Can current cloud, ERP, and IoT setups carry AI traffic without rework? | Projects stall while teams refactor networks and interfaces under time pressure. |
| Governance And Controls | Are access, monitoring, and policy rules defined before pilots go live? | Audit findings, regulator concerns, and front line workarounds appear after launch. |
| Leadership Ownership | Is a CDO, CIO, or named executive accountable for outcomes, not activity? | AI remains a side project with no clear owner when tradeoffs or risks appear. |

For Saudi enterprises, a short internal review against these five lines can save months of confusion. Jood Alliance often uses this structure during its first workshops in Riyadh, Jeddah, or Dammam to decide whether the next step is architecture design, data clean-up, or leadership alignment.

To make this practical, boards can:

- Score each dimension on a simple 1–5 scale.

- Focus discussion on the two lowest-scoring areas.

- Link any major AI budget approval to a clear plan for closing those specific gaps.
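The three steps above can be sketched as a short scoring helper. This is a minimal illustration, assuming the five dimension names from the table and integer scores; the `weakest_dimensions` function and the sample scores are hypothetical.

```python
# The five readiness dimensions, in the order the table lists them.
DIMENSIONS = (
    "Strategic Alignment",
    "Data Readiness",
    "Integration Architecture",
    "Governance And Controls",
    "Leadership Ownership",
)


def weakest_dimensions(scores, n=2):
    """Return the n lowest-scoring dimensions; ties keep the table order."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"unscored dimensions: {missing}")
    for dimension in DIMENSIONS:
        if not 1 <= scores[dimension] <= 5:
            raise ValueError(f"{dimension} must be scored from 1 to 5")
    # sorted() is stable, so equal scores stay in table order.
    return sorted(DIMENSIONS, key=lambda d: scores[d])[:n]


# Illustrative board scores only.
board_scores = {
    "Strategic Alignment": 4,
    "Data Readiness": 2,
    "Integration Architecture": 3,
    "Governance And Controls": 1,
    "Leadership Ownership": 3,
}
focus = weakest_dimensions(board_scores)
```

With these sample scores, `focus` names Governance And Controls and Data Readiness, which are the two gaps a budget approval would then be tied to.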

The Pre-Build AI Strategy Implementation Checklist

Before signing the next vendor contract, the leadership team should be able to answer yes to all of the below:

- The use case has a named financial outcome with a starting and target figure

- A finance lead has signed off on the measurement method

- The data audit has been run on all source systems the model will rely on

- A named individual owns each input dataset

- AI product owner, model risk officer, and evaluation lead are named, not "to be hired"

- Build vs buy vs partner has been decided and written down with rationale

- First three use cases are sequenced and budgeted, not selected by department politics

- Model approval, data access, output review, and incident response are documented

- An executive sponsor has signed the governance document, not just attended the readout

- A 180-day success definition exists that the CFO and CTO both accept

If any line is unchecked, the strategy is not ready for procurement. It is ready for one more month of pre-work — and that month will pay for itself many times over.

The Right Question to Ask Before You Spend a Riyal

Most AI strategy implementation programs are judged by what shipped. The successful ones are decided by what was sequenced.

Before the next steering committee, ask one question: if we do nothing on AI for the next 90 days and instead fix the foundation, what is the cost of waiting versus the cost of shipping a model on a foundation we do not trust?

For most enterprises, the honest answer surprises the room.

Frequently Asked Questions

What is AI strategy implementation?

AI strategy implementation is the structured process of moving an enterprise from AI ambition to a deployed, governed, and measurable AI capability. It covers six pre-build decisions: defining the financial outcome, auditing data readiness, redistributing decision-making roles, choosing between build, buy, or partner, sequencing the first three use cases, and documenting governance before any model goes live.

Why do most enterprise AI strategies fail?

Most enterprise AI strategies fail before launch because three pre-build decisions get skipped: a measurable financial goal, an honest data-readiness audit, and named ownership for models and data. McKinsey's 2025 State of AI and the IBM Global AI Adoption Index both find that only a minority of enterprises have the data, talent, and governance maturity in place to capture AI value at scale.

What should an enterprise do before implementing an AI strategy?

Before implementing an AI strategy, an enterprise should make six decisions in order: define the financial outcome as a number, audit data quality on five dimensions, name three new AI roles (product owner, risk officer, evaluation lead), choose between build, buy, and partner, sequence the first three use cases from internal to customer-facing, and document AI governance gates before any vendor demo.

How do you measure AI ROI for enterprises?

Enterprise AI ROI is measured in one of three categories: cost reduction, revenue acceleration, or risk avoidance. A real AI use case names a starting figure, a target figure, a deadline, and a finance-approved measurement method. If the project cannot be summarised as "from X to Y, by when, measured by whom," it is not a use case yet — it is a budget waiting for a vendor.

Should enterprises build, buy, or partner for AI capability?

Most GCC enterprises should go partner-first for the first three AI use cases, buy commodity capability where AI is a feature inside an existing workflow, and build only when the AI capability is core competitive IP. Partner-first closes the talent gap fastest and produces measurable outcomes inside 90 days, while build typically requires 18 to 36 months and carries high talent-retention risk.